AI Is a Double-Edged Sword That Can Turn on Its Master

AI can bring humans benefits, but also disasters and losses. It must be used wisely and carefully.

About AI Translated Article

Please note that this article was automatically translated using Microsoft Azure AI, Open AI, and Google Translation AI. We cannot ensure that the entire content is translated accurately. If you spot any errors or inconsistencies, contact us at hotline@kompas.id, and we'll make every effort to address them. Thank you for your understanding.

This photo, taken on January 23, 2023, in Toulouse, southwestern France, shows a screen displaying the OpenAI and ChatGPT logos. ChatGPT is a conversational artificial intelligence application developed by OpenAI.

For all its sophistication, ChatGPT, like AI in general, has its limits. Two striking incidents have highlighted the boundaries and challenges AI faces when it is applied in real-world situations.

In the first case, Mark Walters, a radio host from Georgia in the United States, said ChatGPT had defamed him. He discovered that the chatbot had produced false information accusing him of embezzlement.

This came to light when the editor-in-chief of a publication asked ChatGPT to summarize a lawsuit. ChatGPT's summary stated that Walters had embezzled funds from the Second Amendment Foundation (SAF).

ChatGPT, an artificial intelligence-based application, in use at an office in Jakarta on Tuesday (7/3/2023). ChatGPT is an AI chatbot built on a generative language model that uses transformer technology to predict the probability of the next word in a conversation or text prompt.

According to the lawsuit Walters filed, however, every statement in ChatGPT's summary was fabricated. Seeking redress, Walters is now suing OpenAI for damages. The suit, filed in early June 2023, is considered the world's first defamation lawsuit over content generated by AI.

The second case involved a lawyer representing a client in a lawsuit against a Colombian airline. The lawyer, Steven Schwartz, used ChatGPT to find earlier cases he could cite as precedents. Instead, ChatGPT "cited" convincing but entirely fabricated cases. Last Friday (23/6/2023), Schwartz was fined.

Both cases illustrate what the AI observer community calls hallucination: the AI does not supply facts, only strings of sentences that sound plausible and read smoothly.

The problems predate ChatGPT. In October 2012, the search and machine learning algorithms used by Google drew widespread public reports of racism.

A woman tries out facial recognition technology from OneConnect, a technology services provider for the financial industry, in Jakarta on Wednesday (20/2/2019).

According to the AI, Algorithmic, and Automation Incidents and Controversies (AIAAIC) repository, an independent public-interest initiative that advocates transparency and openness in AI systems, Safiya Noble, a professor of communication at the University of Southern California (USC) Annenberg, found in October 2012 that Google's search engine discriminated against women of color. Her research showed that a Google search for the keyword "Black Girls" returned mostly pornographic pages.

At that time, Google responded by stating that racial and gender stereotypes in its products were isolated incidents. Google then updated its search algorithm to make these stereotypes less visible.

Face, voice, and object recognition technologies have also been reported to cause harm. Plastic Forte, a manufacturer based in Alicante, Spain, was fined by the country's data protection authority in April 2023 for violating its employees' privacy with facial recognition technology.

In May 2023, Amazon also agreed to pay about $31 million in penalties to the US Federal Trade Commission (FTC) in settlements that included charges that it retained children's voice recordings and location data collected through its Alexa voice assistant and used them to improve its voice recognition algorithm.

In June 2023, traffic cameras deployed in the Indian state of Kerala to detect violations were also found to make many errors. The system often mistook the screws or bolts on license plates for the digit zero and automatically issued fines.

These cases are a warning to be cautious and thorough when using AI systems such as ChatGPT or facial, voice, and object recognition, especially where accuracy and truth are crucial. AI can be very beneficial, but it still has limitations and risks that must be taken into account.

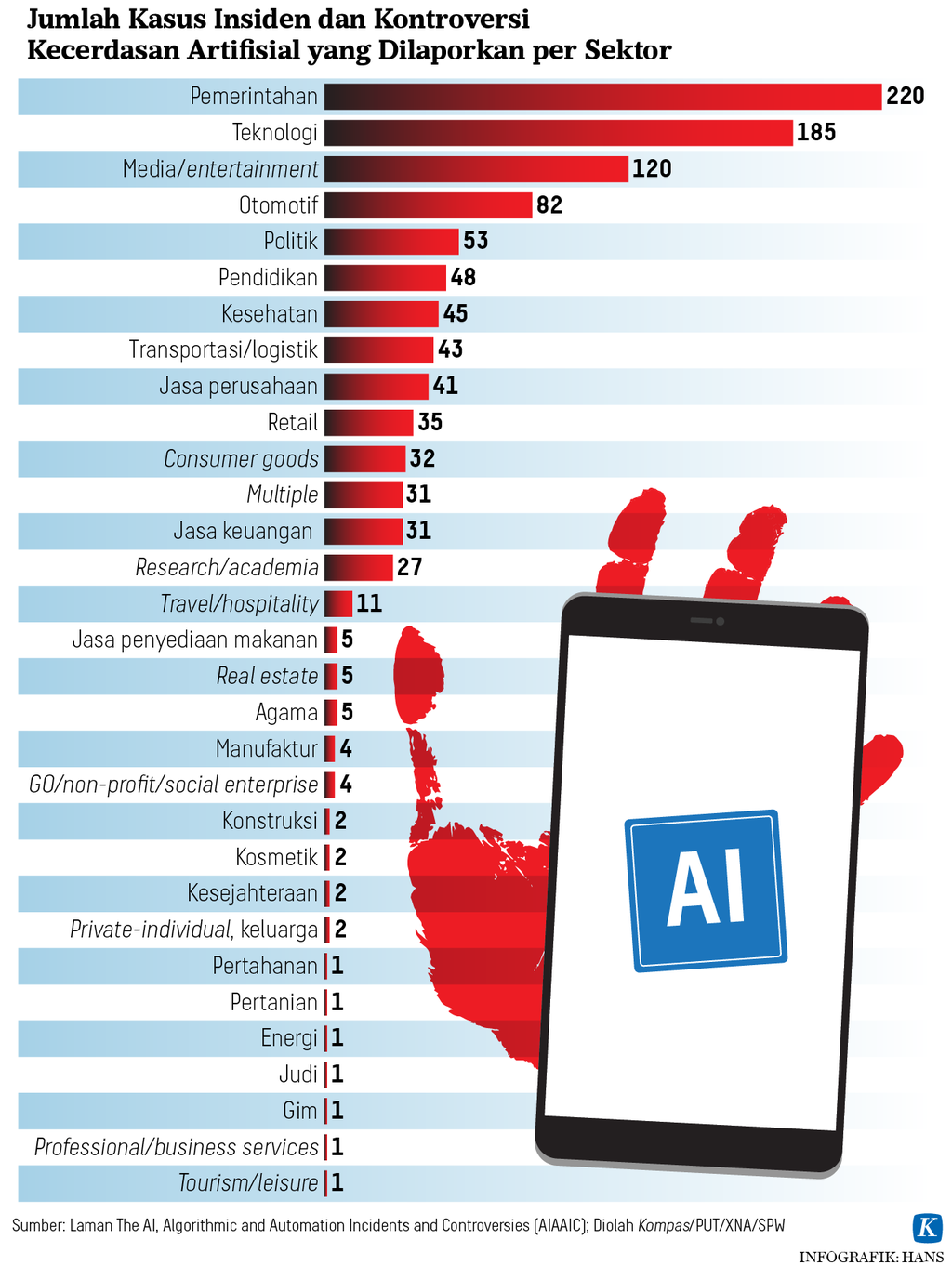

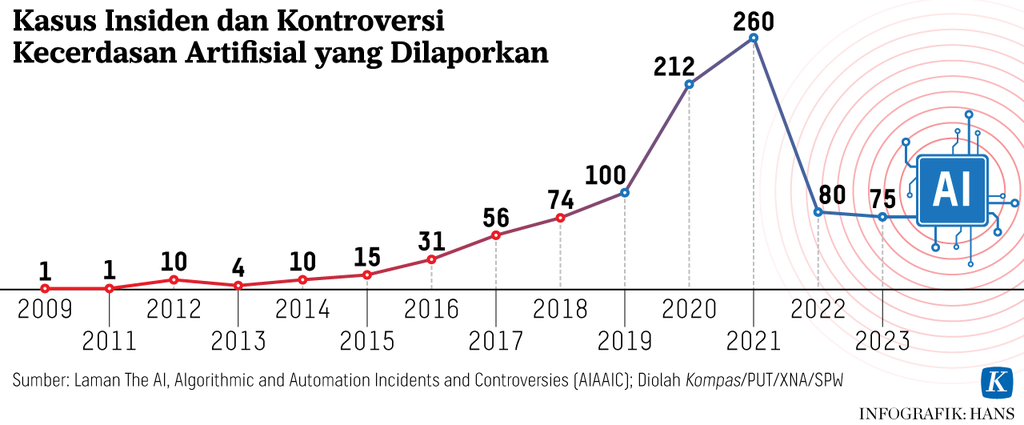

According to Stanford University's AI Index Report 2023, which draws on the AIAAIC repository, the number of AI incidents and controversies reported in 2021 was 26 times the 2012 figure. From around 10 reports in 2012, the count roughly tripled to 31 in 2016 and reached 260 in 2021.

Several countries have issued regulations on privacy and security. In 2020, Latvia imposed restrictions on commercial companies, associations, and foundations important for national security, including companies that develop artificial intelligence. The rule is set out in the "Amendments to the National Security Law 2020."

The US government leaves it to each state to set privacy and security rules according to the risks it faces. In 2022, for example, Alabama banned government agencies from using facial recognition as the sole basis for criminal investigations.

At the end of March 2023, the Italian authorities temporarily banned ChatGPT on the grounds that OpenAI, its developer, lacked adequate privacy protection for user data and had no safeguards for underage users.

The ban was lifted in April 2023 after OpenAI added an age verification tool to ensure that users are at least 13 years old and made ChatGPT's privacy policy available to users before they sign up.

Reducing risk

China is relying on transparency to reduce the risk of AI misuse. Regulations it issued in 2022 govern how private companies use online algorithms for marketing.

The rules require companies to tell users when AI is used for marketing purposes. They also prohibit using customers' financial data to sell the same product at different prices.

In Canada, the proposed Artificial Intelligence and Data Act (June 2022) would require AI developers to draw up mitigation plans that reduce risks and increase transparency when AI is used in high-risk situations. The plans must ensure that the technology does not violate the law or anti-discrimination measures.

Thousands of experts, executives, and technology industry observers have also called for mitigating the risks of AI development in a petition signed on March 31, 2023. They urged a six-month pause in artificial intelligence development to allow time to develop shared safety protocols.

From left: GDP Venture CTO On Lee, OpenAI CEO Sam Altman, Indonesian Minister of Education, Culture, Research, and Technology Nadiem Makarim, and Korika Chairman Prof. Hammam Riza after the "Conversation with Sam Altman" event in Jakarta on Wednesday (14/6/2023), at which Altman shared his views on AI development.

On June 14, 2023, the European Parliament approved its draft of the EU regulation on safe and transparent AI (the AI Act). The draft bans AI systems that pose an unacceptable level of risk to human safety. These include systems that use deliberately manipulative techniques or exploit people's vulnerabilities, and systems used for social scoring, that is, classifying people by socio-economic status or personal characteristics. The rules also prohibit biometric categorization systems that use sensitive characteristics such as gender, race, ethnicity, citizenship status, religion, or political orientation, as well as predictive policing systems based on profiling, location, or past criminal behavior.

The EU rules also require AI developers to conduct public testing and register their models in an EU database before releasing them on the EU market. Generative AI systems such as ChatGPT must meet transparency requirements, including helping people distinguish fake images from genuine ones, and must publish a summary of the copyrighted data used for training.

However, to encourage AI innovation, the rules exempt research activities and AI components provided under open-source licenses.

AI does make some tasks more efficient and raises human productivity. In many cases, however, caution and precision are still needed, and entrusting all work entirely to AI machines is not advisable. We must not let AI become a double-edged sword that turns on its master.