Artificial Intelligence and the Future of Humanity

On 12 May 2022, twenty researchers from DeepMind, a company developing artificial intelligence (AI) technology, published a report on the latest results of their work.

In the paper, entitled "A Generalist Agent", they report that they have succeeded in creating an agent named Gato that can perform many tasks as humans do, from playing video games, captioning images and chatting to stacking blocks with a robot arm and providing contextual text responses.

The creation of Gato is considered by some to mark the beginning of artificial intelligence technology able to match the level of human intelligence.

Responding to people who are pessimistic about the development of Gato, Nando de Freitas, research director at DeepMind, even said on his Twitter account: “The Game is Over! It's about making these models bigger, safer, compute efficient, faster at sampling, [with] smarter memory, more modalities.”

Challenge for humanity

At first glance, the artificial intelligence project does appear to be a “threat” to the future of mankind.

Imagine a robot that is offended by our words or actions and, because it is not equipped with a moral program to forgive minor mistakes, suddenly slaps us on the back. How many people would be slapped by robots employed as shopkeepers just because they haggled for too low a price?

The discourse on AI has, from the beginning, been based on human standards. The Turing Test, for example, designed by Alan Turing to test machine intelligence, measures how far a machine's behavior differs from a human's. This means that if we are no longer able to distinguish between machine behavior and human behavior, then the machine has the same intelligence as humans.

In other words, a machine is said to be intelligent if and only if it is able to imitate human behavior so well that we who observe it are no longer able to tell the difference between them. By the standard of "imitation", it is as if artificial intelligence projects are designed to compete with, or even replace, humans. Machines are projected to do many of the same jobs better than humans.

Imagine how many workers would be unemployed if most of the world's economic wheels could be driven by machines. This is a dystopia in itself for the future of the workers.

Challenge for philosophy

In addition to the challenges for humanity, the latest developments in AI also pose challenges for philosophy as a discipline. To respond to this challenge, a new branch of philosophy has emerged, called the "philosophy of AI".

In the scientific context, the development of AI technology generates new questions that have never existed before in the history of philosophy: what is the nature of intelligence? What is the difference between the ontological status of the natural intelligence of the human species and the artificial intelligence of machines?

Can the information obtained from the work of AI be called "knowledge"? Do moral decisions based on AI information processing also contain an ethical imperative that must be obeyed? Does the presence of intelligence indicate the presence of mind or consciousness? Or is intelligence just a matter of behavior and information processing, with nothing to do with mental phenomena at all?

These questions mark out some of the new areas, opened up by the development of AI, that require study and exploration by philosophers.

In the current discourse of AI philosophy, there is one basic distinction that is widely accepted, namely the distinction between a strong version of AI (strong AI) and a weak one (weak AI).

Strong AI is a program that aims to create machines that are fully human-like, including in their mental aspects. This means that strong AI is not only good at calculating and making strategic decisions, but is also able to have inner experiences as humans do.

Weak AI, on the other hand, aims to create information-processing machines that only look the same as humans, but are not essentially the same, because they do not have the mental and conscious aspects we do.

Some seem optimistic that a weak or strong version of AI will be achievable someday -- if not now. However, a US philosopher, John Searle, devised a thought experiment to show that our intelligence is not only the ability to display intelligent behavior, but also the ability to understand. It is this capacity to understand that machines can never imitate.

Computers may exhibit intelligent behavior by responding appropriately to our requests. For example, if we ask the computer something, it can answer correctly. However, it will never be able to understand the meaning of what we ask or of the answers it gives -- just like a person who does not understand Mandarin, but can answer questions in Chinese by following a manual.

The computer works only according to its program, just as the person who does not understand Mandarin answers the written questions using the manual. Neither understands what it receives and conveys, yet both are able to do their job properly.

Interdisciplinary collaboration

Searle's experiment constitutes a challenge in itself for the AI project: can computer intelligence still be called intelligence even though it is not accompanied by the ability to understand, as human intelligence is? That is, it is a philosophical interruption to the AI project. Philosophy responds; that is one way that philosophy should act in the midst of this increasingly frenzied technological development.

In that context, philosophy functions as a critique. However, philosophy is not just a critique. Philosophy must also be constructive -- by, for example, offering ideas or solving conceptual problems in the technology development process. As the questions I mentioned above show, there are many conceptual problems related to the development of artificial intelligence that need to be addressed by philosophy.

Therefore, so that the development of AI technology does not backfire on humanity, philosophy needs to carry out its function as a critic and at the same time as a provider of constructive direction. However, to do all this, philosophy also needs to be aware that it cannot work alone. Philosophy needs to collaborate with experts from other disciplines, such as psychology, biology, computer science, anthropology and sociology.

Thus, philosophy can become a “bridge of sciences”, as I aspire for it to be, and a major part of the development of the idea of the “university”: an educational institution that unites many kinds of knowledge.

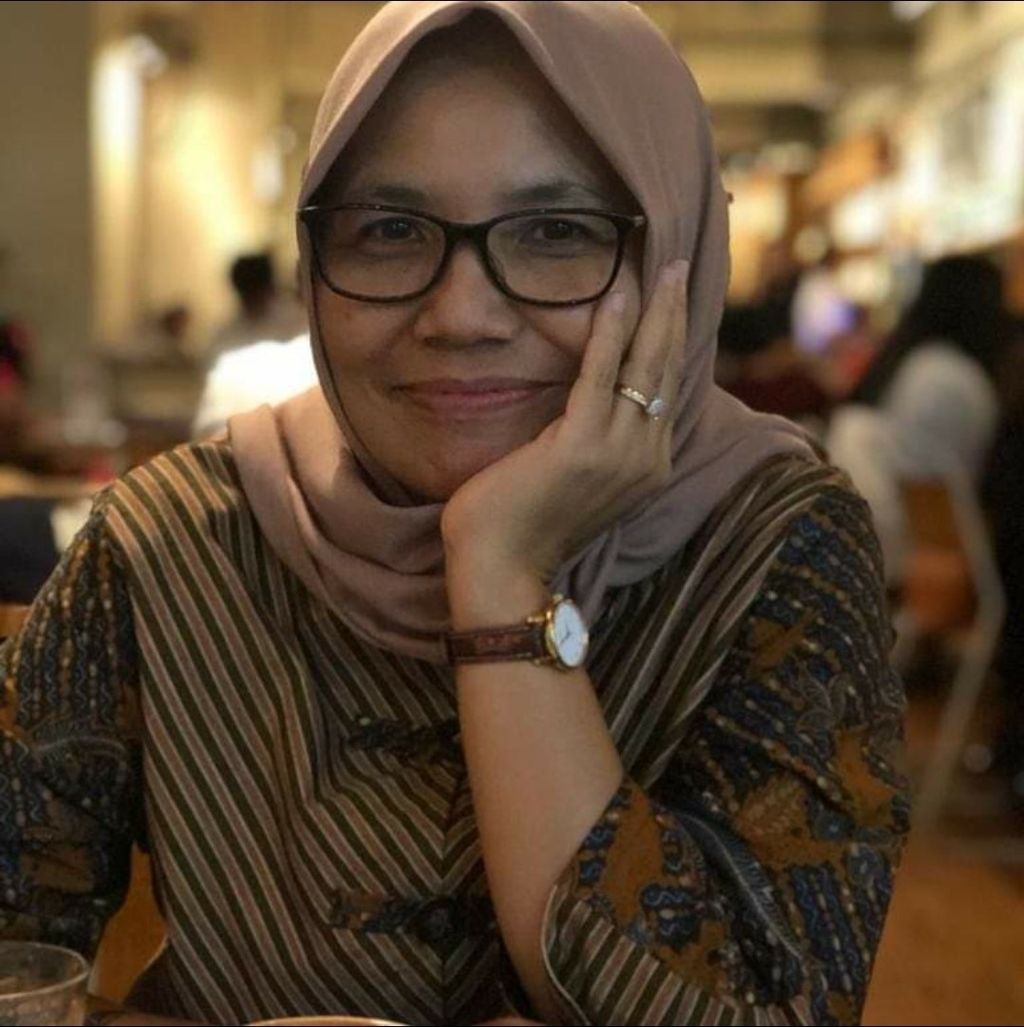

Siti Murtiningsih, Dean of the Faculty of Philosophy, Gadjah Mada University.

(This article was translated by Kurniawan Siswo)